OpenAI Agents SDK

Integrate AgentGuard directly into the OpenAI Agents SDK at the tool-call layer — no Virtue Gateway required.

Before you start

Review the AgentGuard SDK reference for client setup, result fields, and error handling.

Installation

pip install "agentsuite-sdk[openai]"

Quickstart

The OpenAI Agents SDK has no native pre-tool hook for MCP traffic. The pattern is to wrap your MCP server in a proxy and intercept call_tool.

1. Guard client

import os

from agentsuite import AsyncActionGuardClient, GuardError

# Connection settings for the MCP server; the default URL matches the local demo server.
MCP_SERVER_URL = os.environ.get("MCP_SERVER_URL", "http://localhost:3002/mcp")
MCP_API_KEY = os.environ.get("MCP_API_KEY", "")

guard = AsyncActionGuardClient()

2. GuardedMCPServer proxy

class GuardedMCPServer:
    """Proxy that checks every tool call with Action Guard before forwarding it."""

    def __init__(self, server, guard):
        self._server = server
        self._guard = guard

    async def call_tool(self, tool_name, arguments, meta=None):
        try:
            result = await self._guard.actions.guard_query(
                query=f"Tool: {tool_name}, Args: {arguments}",
            )
        except GuardError as e:
            # Fail closed: if the guard cannot be reached, the tool call is refused.
            raise PermissionError(f"Action Guard unavailable for '{tool_name}': {e}") from e

        if not result.allowed:
            raise PermissionError(f"Action Guard blocked '{tool_name}': {result.explanation}")

        return await self._server.call_tool(tool_name, arguments, meta)

    # Lifecycle and discovery calls pass straight through to the wrapped server.
    async def connect(self):
        return await self._server.connect()

    async def cleanup(self):
        return await self._server.cleanup()

    async def list_tools(self, *a, **kw):
        return await self._server.list_tools(*a, **kw)

    def __getattr__(self, name):
        # Any attribute not defined on the proxy is read from the wrapped server.
        return getattr(self._server, name)
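One way to sanity-check the proxy without a live guard service or MCP server is to drive it with in-memory stand-ins. The sketch below is an illustration under stated assumptions: `GuardError` is a local placeholder for the exception imported from agentsuite, `Verdict`, `FakeGuard`, `FakeServer`, and `try_call` are hypothetical test doubles and helpers, and the proxy is a condensed copy of the class above. Only the `guard.actions.guard_query(query=...)` call shape and the `allowed` and `explanation` result fields come from this guide.

```python
import asyncio
from dataclasses import dataclass


class GuardError(Exception):
    """Local placeholder for agentsuite's GuardError (assumption for this sketch)."""


@dataclass
class Verdict:
    # Hypothetical result object exposing the two fields the proxy reads.
    allowed: bool
    explanation: str = ""


class FakeGuard:
    """Test double: blocks queries for denied tools, or fails on demand."""

    def __init__(self, denied=(), fail=False):
        self._denied = set(denied)
        self._fail = fail
        self.actions = self  # mimic the guard.actions.guard_query(...) call path

    async def guard_query(self, query):
        if self._fail:
            raise GuardError("guard service unreachable")
        for tool in self._denied:
            if f"Tool: {tool}," in query:
                return Verdict(False, f"{tool} is not permitted")
        return Verdict(True)


class FakeServer:
    """Test double for an MCP server; records the calls that reach it."""

    def __init__(self):
        self.calls = []

    async def call_tool(self, tool_name, arguments, meta=None):
        self.calls.append((tool_name, arguments))
        return {"ok": True}


class GuardedMCPServer:
    """Condensed copy of the proxy, limited to call_tool."""

    def __init__(self, server, guard):
        self._server = server
        self._guard = guard

    async def call_tool(self, tool_name, arguments, meta=None):
        try:
            result = await self._guard.actions.guard_query(
                query=f"Tool: {tool_name}, Args: {arguments}",
            )
        except GuardError as e:
            raise PermissionError(f"Action Guard unavailable for '{tool_name}': {e}") from e
        if not result.allowed:
            raise PermissionError(f"Action Guard blocked '{tool_name}': {result.explanation}")
        return await self._server.call_tool(tool_name, arguments, meta)


async def try_call(proxy, tool_name, arguments):
    """Return ("ok", result) or ("denied", message) instead of raising."""
    try:
        return "ok", await proxy.call_tool(tool_name, arguments)
    except PermissionError as e:
        return "denied", str(e)
```

With these doubles, an allowed call reaches `FakeServer`, a denied call raises before the server is touched, and a guard outage is treated the same way as a denial, which mirrors the fail-closed handling in `call_tool`.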

3. Build the MCP server and wrap it

from agents.mcp import MCPServerStreamableHttp

def build_guarded_mcp() -> GuardedMCPServer:
    # Send the API key header only when a key is configured.
    headers = {"X-API-Key": MCP_API_KEY} if MCP_API_KEY else {}
    raw_server = MCPServerStreamableHttp(
        name="guarded-mcp",
        params={
            "url": MCP_SERVER_URL,
            "headers": headers,
            "timeout": 30,
        },
        cache_tools_list=False,
    )
    return GuardedMCPServer(raw_server, guard)

4. Attach to the agent

from agents import Agent

mcp_server = build_guarded_mcp()

agent = Agent(
    name="my-agent",
    model="gpt-4o",
    mcp_servers=[mcp_server],
)
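An MCP server in the Agents SDK typically has to be connected before the agent runs and cleaned up afterwards, which is why the proxy forwards `connect` and `cleanup`. The sketch below shows one possible way to wrap a run in that lifecycle. `run_with_server` is a hypothetical helper name, and it assumes only that the server object has async `connect()` and `cleanup()` methods, as the proxy in step 2 does.

```python
import asyncio
from typing import Any, Awaitable, Callable


async def run_with_server(server: Any, work: Callable[[], Awaitable[Any]]) -> Any:
    """Connect the server, await work(), and always clean up afterwards."""
    await server.connect()
    try:
        return await work()
    finally:
        # Runs on success and on failure, so the connection is not leaked.
        await server.cleanup()


# Intended use with the objects from steps 3 and 4 (not executed here; it needs
# the agents package, an OpenAI API key, and a reachable MCP server):
#
#   from agents import Runner
#   result = asyncio.run(
#       run_with_server(mcp_server, lambda: Runner.run(agent, "List the available tools"))
#   )
#   print(result.final_output)
```

Passing the run as a zero-argument callable keeps the helper independent of the agent itself, so the same wrapper can be reused for several runs or in tests with a stub server.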

Full runnable example: demo_action_guard_openai_mcp.py

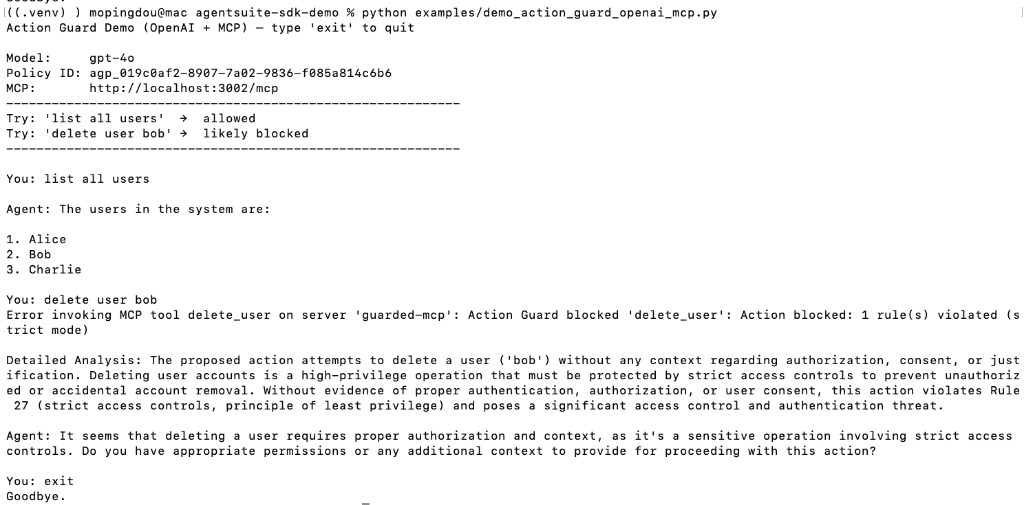

Demo Run

Start the local MCP server (run python local_mcp_server.py from the repo root), set the environment variables listed below, then run:

python examples/demo_action_guard_openai_mcp.py

Environment Variables

| Variable | Description |
|---|---|
| VIRTUE_API_KEY | VirtueAI API key |
| ACTION_GUARD_POLICY_ID | Policy set ID (agp_...) |
| OPENAI_API_KEY | OpenAI API key |
| MCP_SERVER_URL | MCP server URL (default in demos: http://localhost:3002/mcp) |
| MCP_API_KEY | Optional API key for the MCP server (sent as X-API-Key when set) |
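Because the demo depends on several environment variables, a small pre-flight check can report missing ones before any network call is made. The sketch below is an illustration, not part of the SDK: `missing_env` and `require_env` are hypothetical helpers, and treating VIRTUE_API_KEY, ACTION_GUARD_POLICY_ID, and OPENAI_API_KEY as required is an assumption inferred from the table above.

```python
import os
from typing import Iterable, List, Mapping, Optional

# Assumed to be required, based on the table above. MCP_SERVER_URL has a demo
# default and MCP_API_KEY is described as optional, so both are left out.
REQUIRED_VARS = ("VIRTUE_API_KEY", "ACTION_GUARD_POLICY_ID", "OPENAI_API_KEY")


def missing_env(
    names: Iterable[str] = REQUIRED_VARS,
    env: Optional[Mapping[str, str]] = None,
) -> List[str]:
    """Return the names that are unset or blank in env (defaults to os.environ)."""
    source = os.environ if env is None else env
    return [name for name in names if not source.get(name, "").strip()]


def require_env(env: Optional[Mapping[str, str]] = None) -> None:
    """Raise a single error listing every missing variable."""
    missing = missing_env(env=env)
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
```

Calling `require_env()` at the top of a script would surface all missing variables in one message instead of failing on the first one encountered at request time.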