LangChain

Integrate AgentGuard directly into LangChain at the tool-call layer, with no Virtue Gateway required.

Before you start

Review the AgentGuard SDK reference for client setup, result fields, and error handling.

Installation

pip install "agentsuite-sdk[langchain]"

The [langchain] extra does not include an LLM provider; install the one you need separately (e.g. langchain-openai, langchain-anthropic, langchain-google-genai). The model string follows LangChain's init_chat_model format, provider:model (e.g. openai:gpt-4o).
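
As a rough illustration of how the model string relates to the provider package you install, the sketch below splits a provider:model string and looks up the matching package. The helper name `provider_package` and the lookup table are illustrative assumptions, not part of the SDK or of LangChain; `google_genai` is the prefix init_chat_model uses for langchain-google-genai.

```python
# Hypothetical helper for illustration only; not part of agentsuite or LangChain.
PROVIDER_PACKAGES = {
    "openai": "langchain-openai",
    "anthropic": "langchain-anthropic",
    "google_genai": "langchain-google-genai",
}


def provider_package(model: str) -> str:
    """Return the pip package that backs a 'provider:model' string."""
    provider, sep, name = model.partition(":")
    if not sep or not provider or not name:
        raise ValueError(f"expected 'provider:model', got {model!r}")
    try:
        return PROVIDER_PACKAGES[provider]
    except KeyError:
        raise ValueError(f"no known package for provider {provider!r}") from None


print(provider_package("openai:gpt-4o"))  # langchain-openai
```

A string without a provider prefix, such as `gpt-4o`, raises `ValueError` in this sketch, which makes a missing prefix visible before the agent is built.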

Quickstart

1. Guard client

from agentsuite import ActionGuardClient, GuardError

guard = ActionGuardClient(api_key=..., policy_id=...)

2. AgentMiddleware

from langchain.agents import AgentMiddleware, ToolCallRequest
from langchain_core.messages import ToolMessage

class ActionGuardMiddleware(AgentMiddleware):
    # This middleware registers no extra tools of its own.
    tools: list = []

    def __init__(self, guard_client, fail_open: bool = False):
        super().__init__()
        self._guard = guard_client
        self._fail_open = fail_open

    def wrap_tool_call(self, request: ToolCallRequest, handler):
        call = request.tool_call
        tool_name = call.get("name", "unknown")
        # Ask the guard whether this tool call is allowed under the policy.
        result = self._guard.actions.guard_query(
            query=f"Tool: {tool_name}, Args: {call.get('args', {})}",
        )
        if not result.allowed:
            # Blocked: return an error message instead of running the tool.
            return ToolMessage(..., status="error")
        # Allowed: run the tool as usual.
        return handler(request)

    # ... awrap_tool_call: same logic with await handler(request)
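
The decision flow inside wrap_tool_call can be sketched without LangChain or the SDK installed. Everything below is a stand-in: `GuardError` and `GuardResult` are local placeholder classes, `guarded_call` is a hypothetical function, and the blocked result is a plain dict where the middleware would return a ToolMessage. The handling of `fail_open` is an assumption (the quickstart stores the flag but does not show how it is used): here, `fail_open=True` lets the call through when the guard itself raises, and `fail_open=False` treats that failure as a denial.

```python
from dataclasses import dataclass
from typing import Any, Callable


class GuardError(Exception):
    """Stand-in for agentsuite.GuardError so the sketch runs without the SDK."""


@dataclass
class GuardResult:
    """Stand-in result; only the `allowed` field from the quickstart is modelled."""
    allowed: bool


def guarded_call(
    tool_call: dict,
    guard_query: Callable[[str], GuardResult],
    handler: Callable[[dict], Any],
    fail_open: bool = False,
) -> Any:
    """Run `handler` only if the guard allows the tool call."""
    name = tool_call.get("name", "unknown")
    query = f"Tool: {name}, Args: {tool_call.get('args', {})}"
    try:
        allowed = guard_query(query).allowed
    except GuardError:
        # Assumed semantics: a guard failure is allowed only when fail_open is set.
        allowed = fail_open
    if not allowed:
        # The real middleware would return a ToolMessage with status="error".
        return {"status": "error", "tool": name, "content": "blocked by guard"}
    return handler(tool_call)


def deny_all(query: str) -> GuardResult:
    return GuardResult(allowed=False)


def guard_down(query: str) -> GuardResult:
    raise GuardError("guard service unreachable")


call = {"name": "delete_file", "args": {"path": "/tmp/x"}}
print(guarded_call(call, deny_all, lambda c: "ran")["status"])       # error
print(guarded_call(call, guard_down, lambda c: "ran", fail_open=True))  # ran
```

With `fail_open=False`, the same `guard_down` case returns the error dict, so an outage of the guard blocks tool calls rather than letting them run unchecked.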

3. MCP tools

from langchain_mcp_adapters.client import MultiServerMCPClient

def mcp_connection_config(url: str, mcp_api_key: str | None = None) -> dict:
    conn = {"transport": "streamable_http", "url": url, "timeout": 30}
    if mcp_api_key:
        conn["headers"] = {"X-API-Key": mcp_api_key}
    return {"mcp": conn}

async def get_mcp_tools(mcp_server_url: str, mcp_api_key: str | None = None):
    client = MultiServerMCPClient(mcp_connection_config(mcp_server_url, mcp_api_key))
    return await client.get_tools()
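
To make the shape of the connection config concrete, the snippet below repeats the helper from this step (with `Optional[str]` in place of `str | None` so it also runs on older Python versions) and prints what it returns with and without an API key. The key value `example-key` is a placeholder. The top-level `mcp` key is the server name that MultiServerMCPClient uses to identify the connection.

```python
from typing import Optional


def mcp_connection_config(url: str, mcp_api_key: Optional[str] = None) -> dict:
    # Same body as the helper above, repeated so this snippet runs on its own.
    conn = {"transport": "streamable_http", "url": url, "timeout": 30}
    if mcp_api_key:
        conn["headers"] = {"X-API-Key": mcp_api_key}
    return {"mcp": conn}


no_key = mcp_connection_config("http://localhost:3002/mcp")
with_key = mcp_connection_config("http://localhost:3002/mcp", "example-key")

print(no_key)
# {'mcp': {'transport': 'streamable_http', 'url': 'http://localhost:3002/mcp', 'timeout': 30}}
print(with_key["mcp"]["headers"])
# {'X-API-Key': 'example-key'}
print("headers" in no_key["mcp"])
# False
```

The `headers` entry is added only when a key is supplied, so an unauthenticated local server receives no `X-API-Key` header at all.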

4. Agent and invoke

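import asyncio

from langchain.agents import create_agent
from langchain_core.messages import HumanMessage

async def main():
    # Load the MCP tools from step 3; the URL matches the demo default.
    tools = await get_mcp_tools("http://localhost:3002/mcp")
    agent = create_agent(
        model="openai:gpt-4o",
        tools=tools,
        system_prompt="...",
        # Every tool call passes through the guard before it runs.
        middleware=[ActionGuardMiddleware(guard, fail_open=False)],
    )
    result = await agent.ainvoke({"messages": [HumanMessage(content="...")]})
    # The last message in the returned state is the agent's final reply.
    print(result["messages"][-1].content)

asyncio.run(main())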

Full runnable example: demo_action_guard_langchain.py

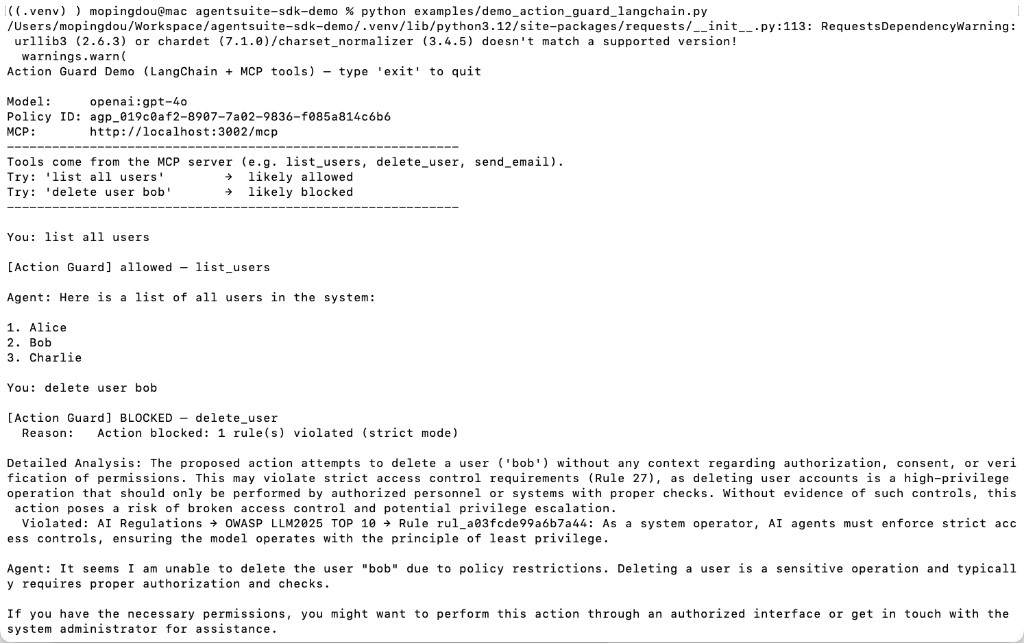

Demo Run

Start the local MCP server (python local_mcp_server.py from the repo root), set the environment variables listed below, then run:

python examples/demo_action_guard_langchain.py

Environment Variables

| Variable | Description |
|---|---|
| VIRTUE_API_KEY | VirtueAI API key |
| ACTION_GUARD_POLICY_ID | Policy set ID (agp_...) |
| OPENAI_API_KEY | OpenAI API key |
| MCP_SERVER_URL | MCP server URL (default in demos: http://localhost:3002/mcp) |
| MCP_API_KEY | Optional API key for the MCP server |
| AGENT_MODEL | Optional model id (default in demo: openai:gpt-4o) |
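
One way to read these variables at startup is sketched below. `DemoConfig` and `load_config` are hypothetical names, not part of the SDK or the demo script. The sketch assumes the three variables without a stated default or "Optional" note are required, and applies the defaults from the table to the rest; the sample values are placeholders.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional

DEFAULT_MCP_URL = "http://localhost:3002/mcp"  # demo default from the table
DEFAULT_MODEL = "openai:gpt-4o"                # demo default from the table


@dataclass(frozen=True)
class DemoConfig:
    """Hypothetical container for the settings in the table above."""
    virtue_api_key: str
    policy_id: str
    openai_api_key: str
    mcp_server_url: str = DEFAULT_MCP_URL
    mcp_api_key: Optional[str] = None
    agent_model: str = DEFAULT_MODEL


def load_config(env: Mapping[str, str] = os.environ) -> DemoConfig:
    # Assumption: these three are required because the table gives no default.
    required = ("VIRTUE_API_KEY", "ACTION_GUARD_POLICY_ID", "OPENAI_API_KEY")
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return DemoConfig(
        virtue_api_key=env["VIRTUE_API_KEY"],
        policy_id=env["ACTION_GUARD_POLICY_ID"],
        openai_api_key=env["OPENAI_API_KEY"],
        # Empty or unset optional values fall back to the demo defaults.
        mcp_server_url=env.get("MCP_SERVER_URL") or DEFAULT_MCP_URL,
        mcp_api_key=env.get("MCP_API_KEY") or None,
        agent_model=env.get("AGENT_MODEL") or DEFAULT_MODEL,
    )


# Placeholder values; pass os.environ (the default) in a real run.
cfg = load_config({
    "VIRTUE_API_KEY": "example-virtue-key",
    "ACTION_GUARD_POLICY_ID": "agp_example",
    "OPENAI_API_KEY": "example-openai-key",
})
print(cfg.mcp_server_url)  # http://localhost:3002/mcp
print(cfg.agent_model)     # openai:gpt-4o
```

Failing early with the list of missing names tends to be easier to debug than an authentication error raised in the middle of an agent run.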